AI statement: No AI was used for this article.

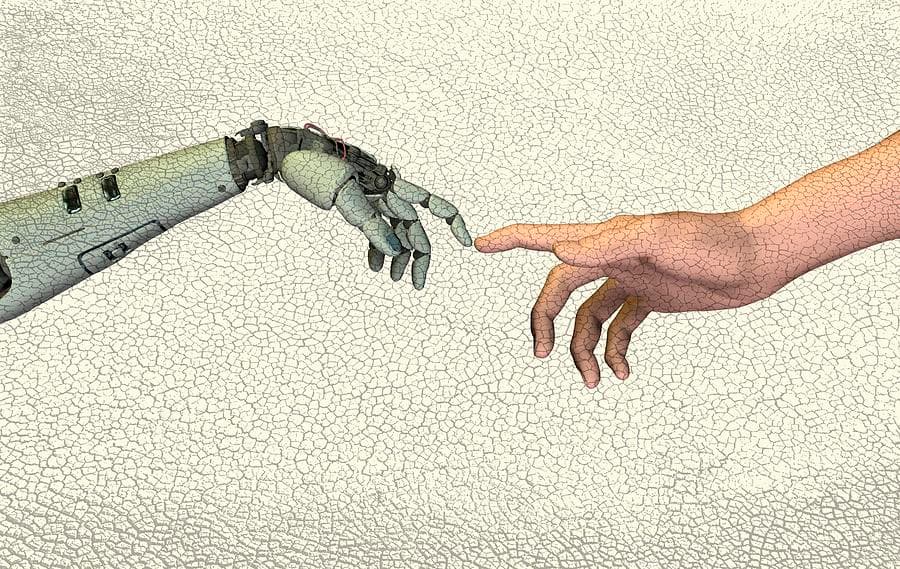

An investigation into how generative AI is challenging Minerva University's pedagogical mission and the shifting landscape of academic assessment.

In founding Minerva University, Ben Nelson set out to change higher education completely. He saw too many universities acting like "certification factories" and wanted to build a school that took the science of learning seriously. As generative AI changes education at all levels, this constantly evolving institution finds itself at the forefront of tackling the challenges it brings.

The Reality of AI Use

Assessing the current state of AI use among students is notoriously difficult: self-reports tend to be inaccurate because students fear incriminating themselves. However, end-of-semester survey data from all Fall 2024 cornerstones (MC50, EA50, FA50, CX50) provides a starting point.

- Preparing for Class: The most frequent use case, with over 40% of students in some cornerstones using AI to get ready for sessions.

- Improving Assignments: Roughly 25-30% of students reported using AI for this purpose, though faculty members like Professor Terrana suspect the actual number is significantly higher.

- Pre-class Work: Approximately 15-20% of students reported using AI to complete preparatory tasks.

- Answering Polls: The least common use, reported by fewer than 5% of students.

The AI Statement Dilemma

Currently, the university requires students to include AI statements with their work. However, Professor Terrana notes that "no one is happy with the system." The current incentive structure often discourages honesty: students who are transparent about AI use risk being downgraded for "bad use," while those who do not report it may avoid scrutiny entirely.

The Academic Standard Committee (ASC) is tasked with handling plagiarism, but it lacks the resources to investigate every concern. Professors often resort to emailing students directly about suspicious work rather than filing formal reports, which prevents the university from seeing the full picture of recurring issues.

Defensive vs. Offensive Strategies

Faculty members are debating whether to teach productive AI use (the offensive approach) or to limit AI use as much as possible (the defensive approach). This has led to several shifts in course requirements:

- Editing History: In courses like AH111, students must share Google Docs with full editing history to show that the text wasn't simply copied and pasted.

- Technical Interviews: Math, physics, and CS courses (like CS114) are increasingly using live interviews or video recordings to verify student understanding.

- Assessment Changes: Grading for prep polls is being phased out, and questions are being redesigned to be "ChatGPT-unfriendly."

A Shift in Incentives: The 5K vs. The Olympics

Professor Hadavand (Head of Capstone) argues that academia must reconsider assessment entirely. He compares high-stakes grading to the Olympics, where the pressure to win (or get a high GPA) incentivizes "doping" or cheating.

He suggests that learning should be more like a local 5K run:

- Personal Growth: Competing with yourself rather than others.

- Community: Being part of a group with shared goals.

- Learning as the Outcome: Prioritizing the acquisition of knowledge over the metric of a grade.

"As an educator, learning, and not grades, is the outcome... GPAs are metrics for employers to filter good workers. Assessment is the employers' problem, and they need to figure out creative ways to find talent." — Professor Hadavand

When students rely on LLMs to solve problem sets, they lose the arena where they can practice and learn. This risks turning the university back into the "certification factory" it was designed to replace.
